Abstract

Representing and rendering dynamic scenes is an important but challenging task. In particular, it is usually hard to maintain high efficiency while accurately modeling complex motions. To achieve real-time dynamic scene rendering with high training and storage efficiency, we propose 4D Gaussian Splatting (4D-GS), a holistic representation for dynamic scenes that avoids applying 3D-GS to each individual frame. 4D-GS is a novel explicit representation that contains both 3D Gaussians and 4D neural voxels. A decomposed neural voxel encoding algorithm inspired by HexPlane efficiently builds Gaussian features from the 4D neural voxels, and a lightweight MLP then predicts Gaussian deformations at novel timestamps. 4D-GS achieves real-time rendering at high resolutions, 82 FPS at 800×800 on an RTX 3090 GPU, while maintaining quality comparable to or better than previous state-of-the-art methods.

Our method achieves real-time rendering of dynamic scenes at high image resolutions while maintaining high rendering quality. The right plot reports results mainly on synthetic datasets; the radius of each dot corresponds to the training time. "Res": resolution.

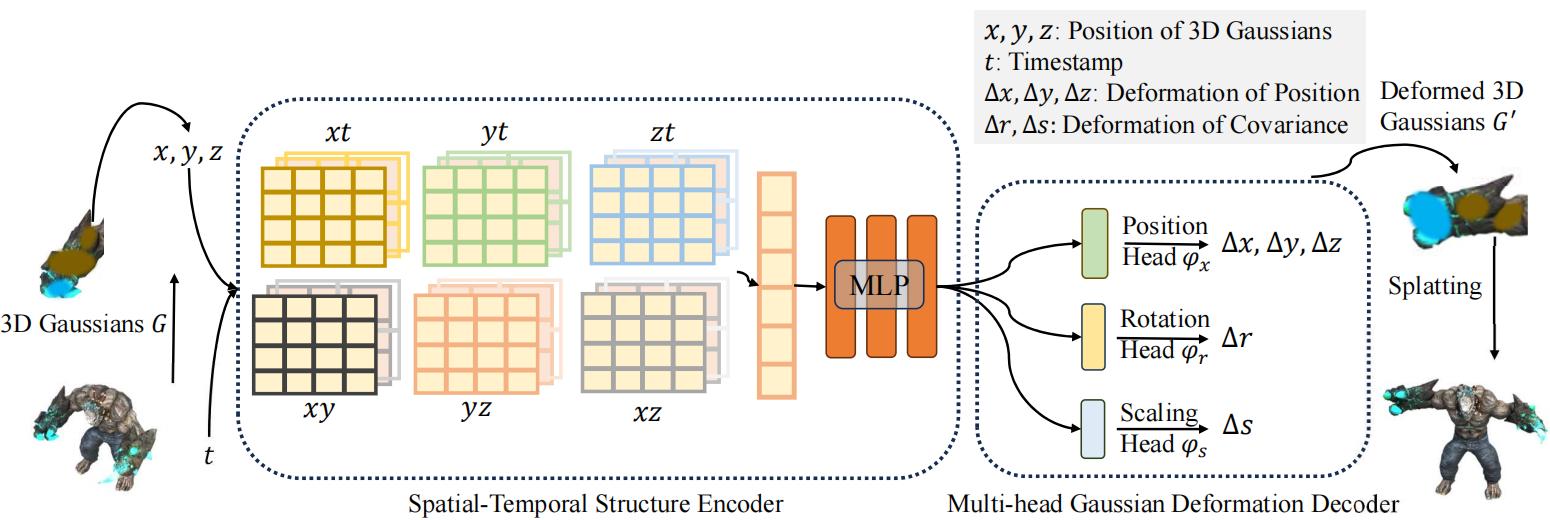

The overall pipeline of our model. Given a group of 3D Gaussians S, we take the center X of each 3D Gaussian and the timestamp t, and compute features with a spatial-temporal structure encoder. A multi-head Gaussian deformation decoder then decodes these features to obtain the deformed Gaussians S' at timestamp t.
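
The pipeline above can be illustrated with a minimal, pure-Python sketch. It is not the authors' implementation, and several details are assumptions made only for illustration: the 4D voxel grid is decomposed into six 2D planes (xy, xz, yz, xt, yt, zt) in the spirit of HexPlane, plane features are sampled bilinearly and fused by element-wise product, coordinates are normalized to [0, 1], each decoder head is a single linear layer standing in for the lightweight MLP, and the heads are assumed to predict offsets for center, rotation, and scale. All function names (`make_plane`, `bilerp`, `encode`, `make_head`, `deform`) are hypothetical.

```python
import random

# Axis pairs of the six planes over (x, y, z, t): xy, xz, yz, xt, yt, zt.
PLANES = [(0, 1), (0, 2), (1, 2), (0, 3), (1, 3), (2, 3)]


def make_plane(res, dim, rng):
    """Create a res x res grid of dim-dimensional features (res >= 2)."""
    return [[[rng.uniform(0.5, 1.5) for _ in range(dim)]
             for _ in range(res)] for _ in range(res)]


def bilerp(plane, u, v):
    """Bilinearly interpolate a feature vector at (u, v) in [0, 1]^2."""
    res = len(plane)
    u, v = min(max(u, 0.0), 1.0), min(max(v, 0.0), 1.0)
    fu, fv = u * (res - 1), v * (res - 1)
    i0, j0 = min(int(fu), res - 2), min(int(fv), res - 2)
    a, b = fu - i0, fv - j0
    return [
        (1 - a) * (1 - b) * plane[i0][j0][c]
        + a * (1 - b) * plane[i0 + 1][j0][c]
        + (1 - a) * b * plane[i0][j0 + 1][c]
        + a * b * plane[i0 + 1][j0 + 1][c]
        for c in range(len(plane[0][0]))
    ]


def encode(planes, xyzt):
    """Spatial-temporal encoder: fuse the six plane samples by product."""
    feat = [1.0] * len(planes[0][0][0])
    for plane, (a, b) in zip(planes, PLANES):
        sample = bilerp(plane, xyzt[a], xyzt[b])
        feat = [f * s for f, s in zip(feat, sample)]
    return feat


def make_head(out_dim, in_dim, rng):
    """One linear head (weights, bias); a stand-in for a small MLP."""
    w = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)]
         for _ in range(out_dim)]
    return w, [0.0] * out_dim


def deform(gaussian, t, planes, heads):
    """Decode per-attribute offsets and add them to the input Gaussian."""
    feat = encode(planes, gaussian["center"] + [t])
    out = {}
    for name, (w, b) in heads.items():
        delta = [sum(wi * fi for wi, fi in zip(row, feat)) + bi
                 for row, bi in zip(w, b)]
        out[name] = [g + d for g, d in zip(gaussian[name], delta)]
    return out


rng = random.Random(0)
planes = [make_plane(8, 4, rng) for _ in PLANES]
heads = {
    "center": make_head(3, 4, rng),
    "rotation": make_head(4, 4, rng),  # quaternion offset (assumed)
    "scale": make_head(3, 4, rng),
}
g = {"center": [0.2, 0.5, 0.7],
     "rotation": [1.0, 0.0, 0.0, 0.0],
     "scale": [0.1, 0.1, 0.1]}
g_t = deform(g, 0.25, planes, heads)  # deformed Gaussian at t = 0.25
```

In this sketch only the six planes and the small heads carry time-dependent parameters, so a single set of Gaussians can be queried at any timestamp instead of storing one set per frame.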